A glimpse into the black box of neural networks

At the NeurIPS* conference in December 2025, researchers from the Institut de la Vision presented a new method to assess how efficiently neural networks, biological or artificial, process information. This "local information model", the result of work in fundamental mathematics inspired by the visual system, offers a new way of understanding how artificial intelligence, and also our brain, functions.

In the broadest sense, a neuron is a unit of information processing, which transforms input data into an output signal. But it is also a switching system capable of adjusting its connections with other neurons to form a dynamic network. Such a network generates predictions and determines the error in relation to the expected result to adjust its connections and optimize its response, becoming more complex with each learning experience.

Opaque networks in research

In our brains as well as in Artificial Intelligence (AI), these hundreds of billions of dynamic connections form "black boxes". Their complexity is such that it is impossible to accurately assess the parameters that have the greatest influence on their functioning. This interpretability issue, which can mask vulnerabilities, biases or redundancies, is one of the major obstacles to securing and optimizing AI.

Similarly, from a neuroscience perspective, vision is a process that is not fully understood. While researchers are now able to determine how much visual information is transmitted from our retina to the visual cortex, the brain's encoding and processing of information remains too complex to be described with a single model. However, understanding these mechanisms is essential in the development of new therapies to restore vision. For example, it is necessary to check the quality of the signal sent by retinal implants or prostheses to the brain, and to ensure that the brain can interpret it consistently.

While studying neural networks of the visual system, researchers from the Visual information processing team at the Institut de la Vision, led by Olivier Marre, have devised a method for breaking down the information transmitted by neurons, biological or artificial. Steeve Laquitaine, Simone Azeglio, Carlo Paris, Ulisse Ferrari and Matthew Chalk were inspired by the ability of the visual system to select and transmit only the relevant information of a stimulus to identify which components, for example which pixels of an image, have the most influence on its processing.

It all breaks down to information

Until now, the best models for decomposing information in biological or artificial neural networks did not allow for both exhaustive and precise analysis: either the overall volume of information processed was estimated (Shannon's model) or the impact of a single component on the system was estimated (Fisher's model). Getting an overview of the different components and their relationships was not possible in complex neural networks. The work of researchers at the Institut de la Vision solves this impasse by building a mathematical bridge between the two best-known models (Shannon and Fisher) to create a new one called the "local information model".
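For reference, the two quantities being bridged are usually written as follows in their textbook form. The symbols are notational assumptions made here, not taken from the paper: $x$ is the stimulus (treated as a single number for simplicity), $r$ the neural response and $p$ a probability density.

$$
I(X;R) = \iint p(x,r)\,\log\frac{p(x,r)}{p(x)\,p(r)}\,dx\,dr
\qquad\qquad
J(x) = \mathbb{E}_{r \mid x}\!\left[\left(\frac{\partial}{\partial x}\log p(r \mid x)\right)^{2}\right]
$$

Shannon's mutual information $I(X;R)$ is one number summarizing all stimuli and responses, while the Fisher information $J(x)$ measures how sensitive the response is to a small change around one particular stimulus.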

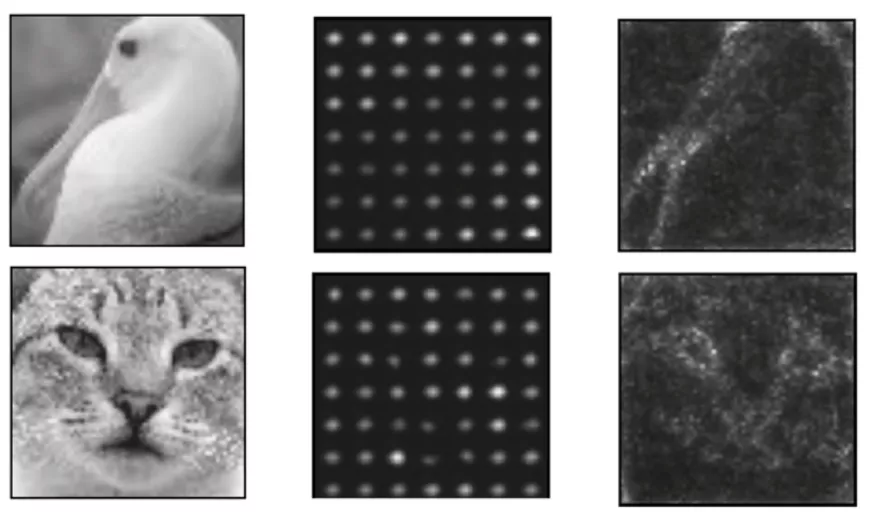

In practice, this new model is a dialogue between two diffusion models, the algorithms behind generative AIs, known for generating images with an element of randomness that makes each visual unique. Instead of generating new images, the researchers at the Institut de la Vision trained a first model to reconstruct an image altered by noise, as if the information were partially obscured by a "fog". The second model follows the same training but with a clue: the electrical signal sent by each neuron in the first model. By comparing the two image reconstructions, it becomes possible to assess how well each of the signals helps to dissipate the "fog". The local information model transforms this difference into a score and projects it onto the original image, making it possible to map precisely which parts of the image play a key role in processing the information. Unlike previous methods that produced fuzzy results, this model focuses with great precision on structural elements such as the edges and contours of objects.
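The comparison described above can be illustrated with a deliberately simplified toy, which is not the researchers' implementation. Instead of trained diffusion models, it assumes independent Gaussian pixels and a single hypothetical linear neuron, so that both "denoisers" have a closed-form expected error. The function name `error_reduction_map` and all parameters are illustrative assumptions.

```python
import numpy as np

def error_reduction_map(w, noise_std, response_noise_std=0.5):
    """Per-pixel drop in expected squared reconstruction error when the
    denoiser is also told the neuron's response.

    Toy assumptions (not from the paper):
      - pixels x are independent standard normal variables,
      - the noisy image is y = x + noise_std * eps,
      - the neuron is linear: r = w @ x + response_noise_std * eta.
    """
    w = np.asarray(w, dtype=float)
    d = w.size
    # Denoiser 1 sees only the noisy image: prior precision + image precision.
    prec_blind = (1.0 + 1.0 / noise_std**2) * np.eye(d)
    # Denoiser 2 also sees r, which adds precision along the direction w.
    prec_informed = prec_blind + np.outer(w, w) / response_noise_std**2
    # The diagonal of the posterior covariance is the expected squared
    # error of the optimal reconstruction at each pixel.
    err_blind = np.diag(np.linalg.inv(prec_blind))
    err_informed = np.diag(np.linalg.inv(prec_informed))
    return err_blind - err_informed

# Hypothetical neuron that only "looks at" pixels 2 and 3 of a 5-pixel image.
weights = [0.0, 0.0, 1.0, 2.0, 0.0]
scores = error_reduction_map(weights, noise_std=1.0)
print(scores)
```

In this toy, the score is zero for pixels the neuron ignores and grows with the weight the neuron gives a pixel, which mirrors the idea of projecting the difference between the two reconstructions back onto the image.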

This closely resembles the way our visual system works: rather than conveying every detail of an image, the retina exploits its redundancies and communicates only spatial and temporal changes and contrasts. Thus, it reduces the flow of data sent to the brain via the optic nerve by a factor of 1000 without losing quality of interpretation. This efficient encoding strategy allows the visual system to analyze complex images with minimal energy compared to AI.

Towards a new generation of analysis tools?

In the proof of concept presented by researchers from the Institut de la Vision, the local information model was more complete and accurate than the standard model currently used to map information. This advance opens up a field of application that goes far beyond neuroscience, extending to all systems based on neural networks, including AI. In particular, the feedback analysis capacity of the local information model will be used by researchers to compare AI optimization strategies with those of the human retina, paving the way for much more energy-efficient systems.

In the near future, researchers plan to use this type of model to develop digital twins of the human retina. These simulations would provide a framework for testing different therapeutic approaches in silico (by computer), before moving on to clinical trials.

*Link to the NeurIPS conference: https://neurips.cc/virtual/2025/loc/san-diego/poster/119182